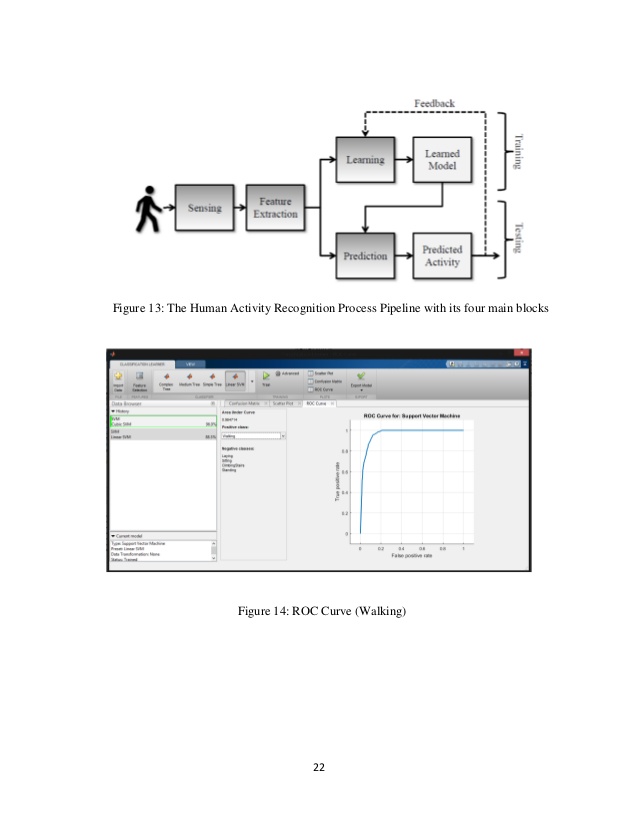

Human Activity Detection Matlab Code

Another advantage of the transformer is the short path length between any two features in the time series, which allows the context in long time series to be learned more accurately, as argued by Vaswani et al. Computation speed and prediction accuracy are both key when working with human activities, particularly when prediction is performed directly on the mobile device. A sequence-to-sequence approach is used to predict activities: all time steps of the transformer output are considered, and an activity label is assigned to each of them. In this way, an activity can be assigned to every time step recorded while live values are measured on the user's mobile device. The attention mechanism is crucial to the transformer model, and each of the multiple attention heads can search for a different definition of relevance or a different correlation. Multi-head attention is based on the principle of mapping a query and a set of key-value pairs to an output. The output is a weighted sum of the values, where the weight assigned to each value (V) is computed by a compatibility function of the query (Q) and the corresponding key (K). The dot products of the query with all keys are calculated, the softmax function is applied to normalize the resulting weights, and the weights are then multiplied by the values; see Equation (1).
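The attention computation described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: it assumes the scaled form softmax(QKᵀ/√d_k)V from Vaswani et al., and the function names, the random projection matrices, and the sizes (8 time steps, 12 features, 3 heads) are hypothetical values chosen only for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    # Compatibility of each query with every key, scaled by sqrt(d_k)
    # (the scaling is the Vaswani et al. form, assumed here).
    d_k = Q.shape[-1]
    scores = Q @ np.swapaxes(K, -1, -2) / np.sqrt(d_k)
    weights = softmax(scores, axis=-1)   # each row sums to 1
    return weights @ V, weights          # weighted sum of the values

def multi_head_self_attention(X, W_q, W_k, W_v, W_o, num_heads):
    # X: (time_steps, d_model). Each head attends over all time steps.
    T, d_model = X.shape
    d_head = d_model // num_heads

    def split(M):
        # (T, d_model) -> (num_heads, T, d_head)
        return M.reshape(T, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ W_q), split(X @ W_k), split(X @ W_v)
    heads, weights = scaled_dot_product_attention(Q, K, V)
    # Concatenate the heads back to (T, d_model) and mix them.
    concat = heads.transpose(1, 0, 2).reshape(T, d_model)
    return concat @ W_o, weights

# Hypothetical toy input: 8 time steps, 12 features, 3 heads.
rng = np.random.default_rng(0)
T, d_model, num_heads = 8, 12, 3
X = rng.normal(size=(T, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) * 0.1 for _ in range(4))
out, attn = multi_head_self_attention(X, W_q, W_k, W_v, W_o, num_heads)
```

Because every time step keeps its own output row, a per-time-step classifier can be applied to `out` to label each step, which matches the sequence-to-sequence use described in the text. Each slice `attn[h]` holds one head's weights, so different heads are free to pick up different correlations.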


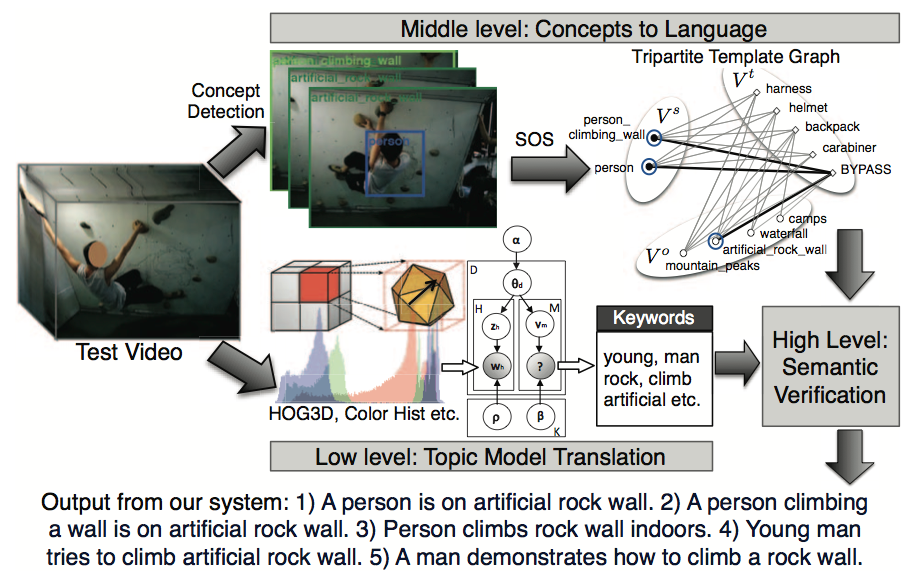

Two-dimensional convolutional networks work on the principle of an image classifier: they learn to recognize hidden patterns in signal frames and predict activities from them. The transformer model is a universal architecture that, like convolutional neural networks, is used in NLP, vision, and signal processing tasks. The current manuscript deals with the application of deep neural networks directly to the normalized time series of the sensor signals. This study employs an alternative approach to processing time series that is based purely on attention mechanisms, called the transformer. The transformer uses attention mechanisms to find correlations between features in the time series and allows massive parallelization of time-series calculations, in contrast to recurrent neural networks, which iterate serially through a time series.
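As a rough illustration of what "normalized time series" could look like as model input, the sketch below standardizes each sensor axis and cuts the recording into fixed-length windows. The text does not specify the normalization scheme, window length, or stride, so the z-score normalization, the 128-sample window, and the 64-sample stride are all assumptions, and the synthetic six-axis signal merely stands in for accelerometer and gyroscope readings.

```python
import numpy as np

def zscore_per_channel(signal, eps=1e-8):
    # signal: (time_steps, n_axes). Standardize each axis independently
    # (z-score is an assumed choice; the source only says "normalized").
    mean = signal.mean(axis=0, keepdims=True)
    std = signal.std(axis=0, keepdims=True)
    return (signal - mean) / (std + eps)

def sliding_windows(signal, window, stride):
    # Cut the recording into overlapping windows: (n_windows, window, n_axes).
    starts = range(0, signal.shape[0] - window + 1, stride)
    return np.stack([signal[s:s + window] for s in starts])

# Synthetic stand-in for 3 accelerometer + 3 gyroscope axes (hypothetical data).
rng = np.random.default_rng(0)
raw = rng.normal(loc=[0, 0, 9.8, 0, 0, 0], scale=2.0, size=(500, 6))

norm = zscore_per_channel(raw)
windows = sliding_windows(norm, window=128, stride=64)  # assumed sizes
```

Each window is a complete sequence, so all of its time steps can be passed to an attention layer at once rather than one step at a time as in a recurrent network.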

Using attention blocks, the transformer directly focuses on predicting the intensity of the gain/loss of the feature during the feed-forward phase based on the context found during the learning process.

Alemayoh et al. converted the features in a time series into a grayscale image, with shades of gray expressing the values measured by the sensor. Merging the data from all axes of the accelerometer and the gyroscope produced a 2D image, which was processed by a 2D convolutional neural network. The network input could be arranged into one common frame or, when each sensor was processed separately, each sensor was assigned its own channel of the frame (similarly to an RGB frame, which has three channels). In the case of the J48 decision tree method, Fourier and wavelet transform preprocessing as well as feature acquisition were used. This preprocessing allowed the signal to be divided into high-frequency portions representing noise and low-frequency portions representing real activities. In contrast, the present study focuses on finding advantageous alternatives to classical approaches such as convolutional neural networks or recurrent neural networks. The results suggest the expected future relevance of the transformer model for human activity recognition.
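The image-based encoding described above might look roughly like the following sketch, which maps one window of sensor readings to 8-bit gray levels. It is not the cited authors' procedure: the per-axis min-max scaling, the row/column layout, and the helper name `window_to_grayscale` are assumptions made for illustration, and the input is synthetic.

```python
import numpy as np

def window_to_grayscale(window):
    # window: (time_steps, n_axes) of raw sensor values.
    # Scale each axis to [0, 1] with min-max scaling (assumed), then to 0..255.
    lo = window.min(axis=0, keepdims=True)
    hi = window.max(axis=0, keepdims=True)
    span = np.where(hi > lo, hi - lo, 1.0)   # avoid division by zero on flat axes
    scaled = (window - lo) / span
    # Rows are sensor axes, columns are time steps.
    return np.round(scaled * 255).astype(np.uint8).T

# Synthetic window: 128 time steps, 3 accelerometer + 3 gyroscope axes.
rng = np.random.default_rng(0)
window = rng.normal(size=(128, 6))

# One common frame holding all six axes.
frame = window_to_grayscale(window)          # shape (6, 128)

# Alternative layout: one channel per sensor, analogous to colour channels.
per_sensor = frame.reshape(2, 3, 128)        # (sensor, axis, time)
```

Either layout can be fed to a 2D convolutional classifier. The common frame lets filters span both sensors, while the per-sensor channels keep accelerometer and gyroscope rows separate.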

Computing devices that can recognize various human activities or movements can be used to assist people in healthcare, sports, or human–robot interaction. Readily available data for this purpose can be obtained from the accelerometer and the gyroscope built into everyday smartphones. Effective classification of real-time activity data is therefore actively pursued using various machine learning methods. In this study, the transformer model, a deep learning neural network model developed primarily for natural language processing and vision tasks, was adapted for time-series analysis of motion signals. The self-attention mechanism inherent in the transformer, which expresses individual dependencies between signal values within a time series, can match the performance of state-of-the-art convolutional neural networks with long short-term memory. The proposed adapted transformer was tested on the largest available public dataset of smartphone motion sensor data covering a wide range of activities, and it obtained an average identification accuracy of 99.2%, compared with 89.67% achieved on the same data by a conventional machine learning method.